Web3 Interview Signals and Calibration Hub: How Hiring Teams Read Interviews, Misread Signals, and Verify Real Readiness

Most Web3 interview mistakes do not start with bad candidates. They start with bad interpretation.

A candidate sounds fluent, so the panel assumes depth. Another pauses, asks clarifying questions, and thinks carefully, so the panel assumes weakness. Someone can name the right tools, ecosystems, and smart contract risks, so the interview feels strong. Someone else explains trade-offs honestly but without performance language, and gets marked as uncertain.

That is where many Web3 hiring processes begin to drift.

Teams confuse fluency with judgment, speed with depth, and polished answers with real execution readiness. Candidates also misread the room. They prepare for surface-level questions, over-optimize for sounding sharp, and underestimate how much strong interview performance depends on explanation quality, ownership clarity, debugging maturity, and proof that stands up to follow-up.

This hub exists for that gap.

It is not a generic Web3 interview prep page. It is a calibration hub for understanding what interviews actually reveal, what they fail to reveal, and how stronger teams connect interview impressions with proof before making shortlist decisions.

For hiring teams trying to reduce weak-fit interviews

If your Web3 interviews feel inconsistent, noisy, or too dependent on who is in the room, AOB offers two practical ways to improve hiring quality:

Blockchain JD Review

Get a sharper, role-aligned job description with clearer proof expectations, better screening language, and less candidate mismatch.

Blockchain Job Description Review Service for Web3 Hiring Teams | ArtofBlockchain

Post a Web3 Job on AOB

Reach a focused blockchain audience already engaging with jobs, hiring signals, interview prep, and proof-based career discussions.

Job Board | ArtofBlockchain

Who this hub is for

This hub is for founders hiring blockchain talent without a mature interview process yet.

It is for hiring managers trying to reduce false positives and expensive misreads.

It is for senior engineers who interview candidates and want sharper evaluation judgment.

It is for recruiters who need clearer signal language before passing candidates downstream.

It is also for candidates who want to understand what strong Web3 interview performance actually looks like from the evaluator side.

Quick map of this hub

Interview signal interpretation

Use this lane if the real problem is not “more interview questions,” but how the panel reads answers. In Web3 interviews, fluency, speed, confidence, and tool vocabulary can easily be mistaken for readiness.

Role context and calibration

Use this lane when different interviewers are judging the same answer differently. A strong answer for a junior Solidity developer, protocol engineer, smart contract auditor, QA lead, or DevRel candidate will not look identical.

Start here:

Role-Specific Hiring Playbooks | ArtofBlockchain

Technical depth under interview pressure

Use this lane when the interview is testing whether the candidate can reason through EVM behavior, consensus trade-offs, debugging, gas, storage, tooling, or production constraints without hiding behind memorized definitions.

Proof after the interview

Use this lane when the interview sounded promising, but the team still needs evidence. The question becomes: can the candidate connect their answers to GitHub work, architecture notes, debugging trails, test design, audit reasoning, or portfolio artifacts?

Start here:

Recruiters: how do you verify real blockchain experience before the interview? | ArtofBlockchain

JD and process clarity before interviews

Use this lane if interviews feel noisy because the role itself was not framed clearly. Weak ownership language, vague proof expectations, unclear must-have skills, and missing interview logic usually create weak-fit pipelines before the first call happens.

Start here:

Web3 JD Review for Teams Attracting Weak-Fit Blockchain Applicants | ArtofBlockchain

What this hub covers

This hub covers interview calibration in Web3:

How reasoning appears in interviews

How debugging behavior should be interpreted

How articulation changes interviewer confidence

Where role context changes what “good” sounds like

How interview signals should be checked against proof, portfolio, and readable evidence

How weak role framing upstream creates noisy interviews downstream

What this hub is not

This is not a broad “how to prepare for any Web3 interview” guide.

For direct candidate preparation, use:

Smart Contract Interview Prep: Solidity, Security, Debugging, Take-Home Tests & Hiring Signals | ArtofBlockchain

This is not the main hiring-thesis page for AOB.

For the broader evaluator lens, use:

Web3 Hiring Signals | ArtofBlockchain

This is not the role-by-role expectations hub.

For role-specific evaluation logic, use:

Role-Specific Hiring Playbooks | ArtofBlockchain

This is not the main portfolio or proof-artifact hub.

For proof presentation and portfolio structure, use:

The Smart Contract Portfolio That Shows How You Think | ArtofBlockchain

Start here based on your situation

If your hiring panel keeps disagreeing after interviews

Your first problem is probably not candidate quality. It is evaluation drift: different interviewers are rewarding different things.

Go next to:

Role-Specific Hiring Playbooks | ArtofBlockchain

If you are unsure whether an interview showed real depth or polished recall

You need interview-to-proof calibration, not more trivia rounds.

Go next to:

Recruiters: how do you verify real blockchain experience before the interview? | ArtofBlockchain

If your interviews attract too many weak-fit applicants

The problem may begin before screening, inside the JD itself. If ownership, proof expectations, and role scope are vague, interviews get noisy fast.

Go next to:

Blockchain Job Description Review Service for Web3 Hiring Teams | ArtofBlockchain

Web3 Hiring Signals | ArtofBlockchain

If the role is already live and you want better visibility among blockchain candidates

Use a focused jobs ecosystem rather than relying solely on broad reach.

Go next to:

Job Board | ArtofBlockchain

If you are a candidate preparing for smart contract interviews

Do not only practice answers. Practice explanation, debugging, trade-off reasoning, and proof walkthroughs.

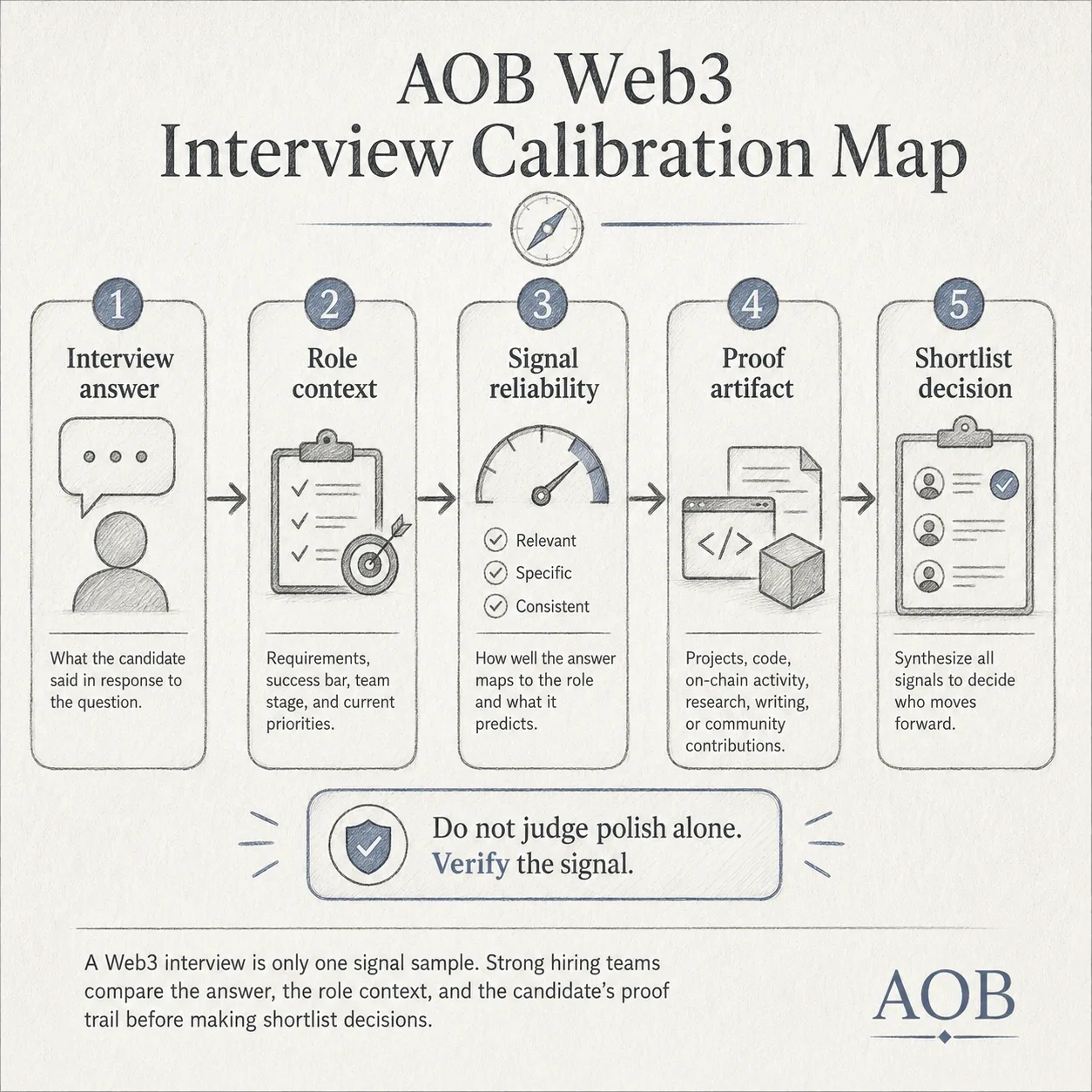

Core framework: the AOB interview calibration model

A useful Web3 interview should not be treated as a final verdict. It should be treated as a signal sample.

Layer 1: Interpretation

What did the candidate actually show?

Not “did they say the expected words?”

Ask:

Did they reason from first principles or pattern-match from memory?

Did they clarify assumptions before answering?

Did they handle trade-offs honestly, or chase the neatest answer?

Did they expose how they think when the path was not obvious?

Layer 2: Context

For what role would that answer count as strong?

A good answer for a junior Solidity candidate is not the same as a good answer for a protocol engineer, security engineer, QA lead, or solutions-facing Web3 role.

This is where many panels break. They use one interview taste across very different job types.

Use role context before making signal judgments.

Relevant next hub:

Role-Specific Hiring Playbooks | ArtofBlockchain

Layer 3: Reliability

Was the signal stable, or was it driven by style?

Some candidates speak fast and look strong while hiding shallow reasoning.

Others sound cautious but are actually more reliable under ambiguity.

A single interview answer can be distorted by stress, wording, and interviewer bias.

That is why confidence alone is a weak hiring signal.

Layer 4: Verification

What proof outside the interview supports or challenges the interview impression?

Can the candidate explain an architecture decision clearly?

Can they show a debugging trail, README, incident note, test design, benchmark note, audit thought process, or scoped ownership example?

The best hiring decisions happen when interviews and artifacts point in the same direction.

Relevant next pages:

How to Explain Your Smart Contract Architecture Decisions Without Sounding Vague or Theoretical | ArtofBlockchain

The Smart Contract Portfolio That Shows How You Think | ArtofBlockchain

Recruiters: how do you verify real blockchain experience before the interview? | ArtofBlockchain

Layer 5: Shortlist decision

The real question is not “was this person impressive?”

It is:

Would this person be readable, trustworthy, and role-aligned enough to move forward with lower execution risk?

That is the bridge between interview performance and hiring signal.
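To make the five layers easier to apply consistently, here is a minimal, hypothetical sketch of how a panel might record interview observations and run them through the layers as a shortlist gate. The `Signal` fields, role labels, the 50% agreement threshold, and the "hold / verify / advance" outcomes are all illustrative assumptions, not an AOB tool or a scoring standard.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Signal:
    """One interview observation (Layer 1: what the candidate actually showed)."""
    description: str
    reasoning_shown: bool           # exposed how they think, not just recalled the expected words
    roles_where_strong: set[str]    # Layer 2: roles for which this answer counts as strong
    interviewers_agreeing: int      # Layer 3: panelists who saw the same thing
    panel_size: int
    supporting_artifact: str | None = None  # Layer 4: README, debugging trail, test design, etc.


def is_reliable(s: Signal, min_agreement: float = 0.5) -> bool:
    # Assumed rule: a signal is "stable" if at least half the panel observed it,
    # so one interviewer's impression of style alone does not carry the decision.
    return s.panel_size > 0 and s.interviewers_agreeing / s.panel_size >= min_agreement


def shortlist_decision(signals: list[Signal], role: str) -> tuple[str, list[str]]:
    """Layer 5: advance only when role-relevant, reliable signals are backed by proof."""
    relevant = [s for s in signals if s.reasoning_shown and role in s.roles_where_strong]
    reliable = [s for s in relevant if is_reliable(s)]
    if not reliable:
        return "hold", ["no stable, role-relevant reasoning signal"]
    # Reliable but unproven impressions are flagged for verification, not rejected.
    unverified = [s.description for s in reliable if s.supporting_artifact is None]
    if unverified:
        return "verify", unverified
    return "advance", []


# Hypothetical panel notes for one candidate.
signals = [
    Signal("explained storage cost trade-offs from first principles", True,
           {"protocol engineer", "solidity developer"}, 3, 3, "gas benchmark note"),
    Signal("walked through isolating a revert in a fork test", True,
           {"solidity developer"}, 2, 3),
]
print(shortlist_decision(signals, "solidity developer"))
# → ('verify', ['walked through isolating a revert in a fork test'])
```

The ordering is the design choice: role context filters signals before reliability is checked, and a reliable but unproven impression returns "verify" rather than "advance", so interview impressions are checked against proof before they affect the shortlist.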

Tools, concepts, and systems that matter here

Reasoning interviews

These are useful when you want to understand how a candidate handles constraints, trade-offs, and changing assumptions.

Use:

I’m preparing for a system design interview. How do you explain blockchain consensus trade-offs without going too deep? | ArtofBlockchain

Deep technical interpretation

These are useful when the role depends on EVM reasoning, execution awareness, gas behavior, storage understanding, or debugging maturity.

Use:

US Web3 startup interview: I blanked on EVM gas (SSTORE/SLOAD, warm vs cold, slot packing). What’s the mental model engineers use in real contracts? | ArtofBlockchain

Proof verification

These help move from interview impression to evidence review.

Use:

Recruiters: how do you verify real blockchain experience before the interview? | ArtofBlockchain

Articulation and explanation systems

These matter when a candidate may have done solid work but cannot make ownership legible in the room.

Discussion and article clusters

Interview interpretation and evaluator judgment

Web3 Hiring Signals | ArtofBlockchain

Smart contract interview reasoning

Smart Contract Interview Prep: Solidity, Security, Debugging, Take-Home Tests & Hiring Signals | ArtofBlockchain

Debugging, tools, and production thinking

Solidity Debugging & Tooling Hub: Reverts, Trace Debugging, Hardhat, Foundry, Fork Tests, and Incident Proof | ArtofBlockchain

https://artofblockchain.club/discussion/debugging-tooling-production-engineeri

Proof, portfolio, and readable evidence

The Smart Contract Portfolio That Shows How You Think | ArtofBlockchain

Recruiter screening and shortlist logic

Recruiters: how do you verify real blockchain experience before the interview? | ArtofBlockchain

Blockchain Job Description Review Service for Web3 Hiring Teams | ArtofBlockchain

Hiring signal bridge

In Web3, interviews are not useful because they produce perfect certainty. They are useful because they reveal how much uncertainty remains.

A strong candidate is not only someone who answers well. A strong candidate becomes easier to verify after the interview. Their explanations connect to artifacts. Their trade-offs sound consistent with the systems they claim to have touched. Their debugging language feels lived-in. Their ownership survives follow-up questions.

That is why interview calibration matters to hiring quality.

Without calibration, teams over-reward polish, under-reward readable substance, and reject candidates who may actually be safer long-term bets. With calibration, interviews become one part of a proof-based hiring flow instead of a standalone gate.

If you want the broader evaluator thesis behind that approach, go next to:

Web3 Hiring Signals | ArtofBlockchain

Need better signal before interviews even begin?

Many interview problems start earlier than the interview itself. Weak-fit applicants, unclear evaluation rounds, vague ownership expectations, and inconsistent panel decisions often trace back to poor role framing.

AOB’s Blockchain JD Review is built for hiring teams that want:

Clearer proof expectations

Sharper role alignment

Better shortlist quality

Less noise in screening

If your hiring team is struggling to judge candidates consistently, the problem may not be the candidate pool alone. Sometimes the JD, interview flow, proof expectations, and shortlisting criteria are not aligned.

For hiring teams, AOB can help with clearer role framing here:

Web3 JD Review for Teams Attracting Weak-Fit Blockchain Applicants | ArtofBlockchain

If the role is already defined and you want to reach Web3 candidates, you can also post it here:

Post a Web3 Job | Blockchain Job Board for Founders, Recruiters & Hiring Teams | ArtofBlockchain

Proof layer: what good proof looks like after the interview

Good proof after an interview usually looks like:

A repo with a clear README and scoped ownership

A project explanation that names trade-offs, constraints, and why choices were made

A debugging story that shows how the candidate isolated the issue and verified the fix

A testing or review artifact that shows how correctness was checked

An architecture explanation that stays precise without becoming vague or inflated

A portfolio page that makes the work readable across different roles

Useful pages for this layer:

The Smart Contract Portfolio That Shows How You Think | ArtofBlockchain

Common mistakes

Turning this hub into generic interview tips

Treating confidence as depth

Using one interview taste across every role

Letting tool preference stand in for engineering judgment

Making shortlist decisions without checking proof

Keeping JD expectations vague and then blaming the interview for noisy outcomes

Letting hiring-team readers fall into candidate-service paths from this page

FAQ

What do Web3 interviews actually measure beyond technical answers?

The best ones measure reasoning quality, ambiguity handling, trade-off judgment, debugging maturity, and whether the candidate can make their work legible under pressure. They should not be treated as pure trivia checks.

Why do strong blockchain candidates still fail interviews?

Often because the panel misreads slow thinking as weak thinking, or because the candidate has done real work but cannot explain ownership, decisions, and constraints clearly enough in the room.

How should hiring teams calibrate blockchain interview signals across different interviewers?

They should agree in advance on what each round is supposed to detect, what counts as strong evidence, and which impressions need external proof before affecting shortlist decisions.

What is the difference between Web3 interview prep and interview calibration?

Interview prep helps candidates perform better. Interview calibration helps teams and candidates interpret performance more accurately. This page is about interpretation, not generic preparation.

How do recruiters verify whether blockchain interview performance reflects real experience?

They should compare interview claims against artifacts such as portfolio pieces, readable GitHub work, architecture explanations, debugging trails, scoped ownership examples, and role-aligned project evidence.

What counts as a strong interview signal for smart contract roles?

Clear trade-off reasoning, ability to explain execution behavior, debugging maturity, security awareness in context, and answers that stay coherent when follow-up questions test depth rather than memorization.

Can better job descriptions improve interview quality in Web3 hiring?

Yes. When ownership, proof expectations, and role scope are clearer upfront, screening improves, and interviews become less noisy. That is why JD framing sits upstream of interview calibration.

Internal navigation block

Parent hiring-signal cluster

Web3 Hiring Signals | ArtofBlockchain

Role-Specific Hiring Playbooks | ArtofBlockchain

Interview and role-prep hubs

Smart Contract Developer Career Hub: Skills, Proof, Interview Prep and Jobs | ArtofBlockchain

Job Search & Web3 Career Navigation Hub | ArtofBlockchain

Proof and articulation layer

The Smart Contract Portfolio That Shows How You Think | ArtofBlockchain

Screening and hiring-quality layer

Recruiters: how do you verify real blockchain experience before the interview? | ArtofBlockchain

Blockchain Job Description Review Service for Web3 Hiring Teams | ArtofBlockchain

Closing CTA

If your interview process keeps producing confusion instead of conviction, the issue may not be candidate quality. It may be weak calibration, weak proof visibility, or weak role framing upstream.

Use this hub to sharpen how Web3 interview signals are interpreted, then take the next practical step based on your need.

If you want to improve how the role is framed before screening starts:

Blockchain JD Review

Blockchain Job Description Review Service for Web3 Hiring Teams | ArtofBlockchain